Introduction

Artificial Intelligence is no longer confined to massive cloud data centers. A new paradigm is emerging: Edge AI, where intelligence moves closer to where data is actually generated.

At MyFluiditi, we see this shift not as a trend, but as a fundamental transformation in how modern digital systems are designed. Businesses are moving from centralized intelligence to distributed, real-time decision-making systems, and Edge AI is at the core of this evolution.

In simple terms, Edge AI allows devices like smartphones, sensors, cameras, and machines to process data locally instead of sending it to the cloud.

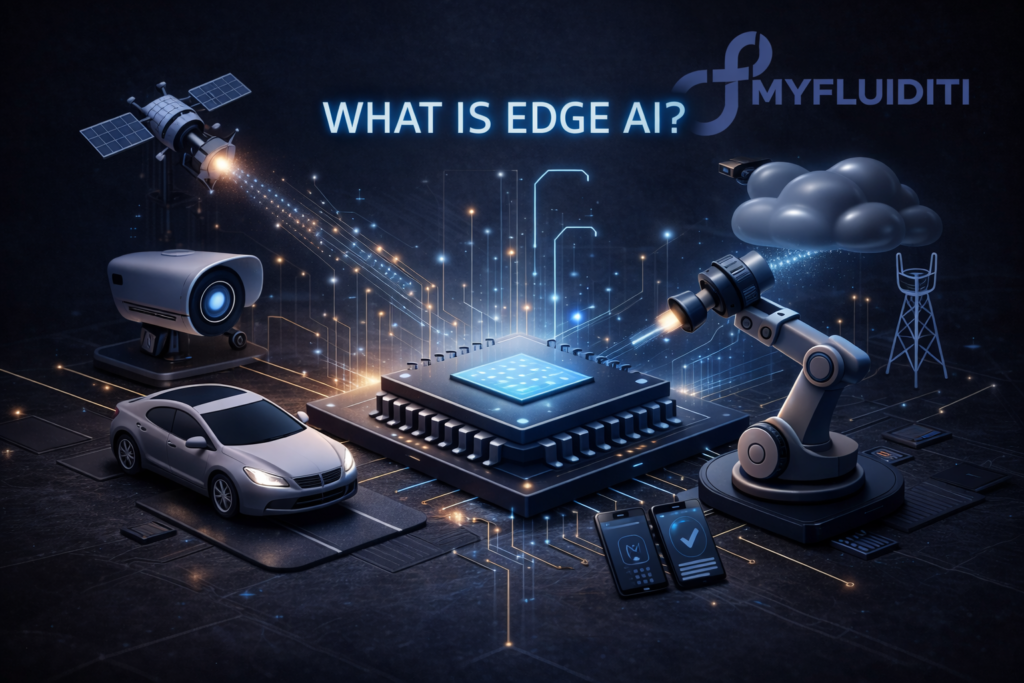

What is Edge AI?

Edge AI refers to deploying AI models directly on edge devices, close to the source of data, rather than relying entirely on centralized cloud infrastructure.

Traditionally:

- Data → sent to cloud → processed → response returned

With Edge AI:

- Data → processed locally → instant action

This architectural shift enables real-time insights, faster decisions, and reduced dependency on connectivity.

At MyFluiditi, we implement this as part of a hybrid AI architecture, where:

- The edge handles real-time inference

- The cloud handles training and large-scale intelligence
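
To make the split concrete, here is a minimal sketch of a hybrid setup, assuming a cloud-side registry that publishes model versions and an edge device that runs inference locally and pulls updates when it can. The names `CloudRegistry`, `EdgeDevice`, and `Model` are hypothetical, and a single threshold stands in for trained weights; this is an illustration, not a description of any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    version: int
    threshold: float  # stands in for trained weights in this sketch


class CloudRegistry:
    """Cloud side: owns training (simulated here) and publishes the latest model."""

    def __init__(self) -> None:
        self._latest = Model(version=1, threshold=0.5)

    def publish(self, threshold: float) -> None:
        # A real system would train and validate here; we only bump the version.
        self._latest = Model(self._latest.version + 1, threshold)

    def latest(self) -> Model:
        return self._latest


class EdgeDevice:
    """Edge side: runs inference locally with whatever model it last synced."""

    def __init__(self, model: Model) -> None:
        self.model = model

    def infer(self, score: float) -> bool:
        # Local decision, no network call involved.
        return score >= self.model.threshold

    def sync(self, registry: CloudRegistry) -> bool:
        # Pull a newer model if one exists; return True when an update happened.
        latest = registry.latest()
        if latest.version > self.model.version:
            self.model = latest
            return True
        return False


registry = CloudRegistry()
device = EdgeDevice(registry.latest())

before = device.infer(0.6)       # uses model v1 (threshold 0.5)
registry.publish(threshold=0.7)  # cloud releases model v2
stale = device.infer(0.6)        # device still on v1 until it syncs
updated = device.sync(registry)
after = device.infer(0.6)        # now uses model v2 (threshold 0.7)
```

The point of the design is that inference never waits on the network: the device keeps answering with its current model and only swaps it when a sync succeeds.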

Why Edge AI Matters Today

The rise of IoT, smart devices, and real-time applications has exposed the limitations of cloud-only AI.

Key limitations of traditional cloud AI:

- Latency delays

- High bandwidth usage

- Privacy risks

- Dependency on internet connectivity

Edge AI mitigates each of these limitations by bringing computation closer to the user.

As industries demand instant decision-making, Edge AI becomes essential rather than optional.

How Edge AI Works

At a technical level, Edge AI operates through three core layers:

1. Data Collection

Devices like sensors, cameras, or IoT systems capture real-world data.

2. Local Processing (Inference)

Pre-trained AI models run directly on the device to analyze data instantly.

3. Action or Insight

The system takes immediate action without needing cloud communication.

Unlike cloud AI, which focuses heavily on training, Edge AI primarily focuses on inference, which is lighter and optimized for speed.
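
The three layers above can be sketched as a small pipeline. This is a simplified illustration under stated assumptions: the `Reading` type, the function names, and the 80 °C threshold are all hypothetical, and a simple threshold rule stands in for a pre-trained model.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Reading:
    sensor_id: str
    temperature_c: float


def collect(raw_values: Iterable[float], sensor_id: str = "sensor-1") -> List[Reading]:
    """Layer 1: wrap raw values as readings (stands in for real hardware I/O)."""
    return [Reading(sensor_id, value) for value in raw_values]


def infer(reading: Reading, threshold_c: float = 80.0) -> bool:
    """Layer 2: local inference; a threshold rule stands in for a trained model."""
    return reading.temperature_c > threshold_c


def act(is_anomaly: bool) -> str:
    """Layer 3: choose an action on the device, with no cloud round trip."""
    return "shutdown" if is_anomaly else "continue"


def run_pipeline(raw_values: Iterable[float]) -> List[str]:
    return [act(infer(reading)) for reading in collect(raw_values)]


actions = run_pipeline([71.5, 79.9, 84.2])
print(actions)  # ['continue', 'continue', 'shutdown']
```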

At MyFluiditi, we optimize models to run efficiently even on low-power edge devices, ensuring scalability across industries.
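
One widely used way to shrink a model for low-power hardware is post-training quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below shows the basic idea with symmetric int8 quantization in plain Python. It is an illustrative example of the general technique, not a description of MyFluiditi's tooling, and the function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)  # [50, -127, 0, 100]
# Each restored weight differs from the original by at most half a scale step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Storing one byte per weight instead of four cuts weight storage to roughly a quarter, at the cost of a small, bounded rounding error per weight.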

Key Benefits of Edge AI

⚡ 1. Ultra-Low Latency

Processing happens locally, eliminating round-trip delays to the cloud.

🔒 2. Enhanced Data Privacy

Sensitive data stays on-device, reducing exposure risks.

📶 3. Reduced Bandwidth Costs

No need to continuously send large datasets to servers.
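
A back-of-the-envelope calculation shows why. The figures below are assumptions chosen for illustration (a camera producing 100 kB frames at 10 frames per second, versus an edge device that uploads one 200-byte detection event per minute), not measurements.

```python
def daily_megabytes(bytes_per_message: int, messages_per_day: int) -> float:
    """Total upload volume per day in megabytes (1 MB = 1,000,000 bytes)."""
    return bytes_per_message * messages_per_day / 1_000_000


SECONDS_PER_DAY = 24 * 60 * 60
MINUTES_PER_DAY = 24 * 60

# Cloud-only (assumed): every frame is uploaded, 10 frames per second.
cloud_mb = daily_megabytes(100_000, 10 * SECONDS_PER_DAY)

# Edge (assumed): frames are analyzed locally, one small event sent per minute.
edge_mb = daily_megabytes(200, MINUTES_PER_DAY)

print(f"{cloud_mb:,.0f} MB/day vs {edge_mb:.3f} MB/day")  # 86,400 MB/day vs 0.288 MB/day
```

Under these assumptions, a single camera goes from about 86 GB per day to well under 1 MB per day. Real savings depend on how often events occur and how much context each event carries.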

🔄 4. Offline Capability

Systems continue functioning even without internet connectivity.
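
A common pattern behind this is store-and-forward: the device keeps making decisions locally, buffers the results, and uploads them once a connection returns. The sketch below assumes a hypothetical `send` callback that returns `True` when an upload succeeds; the class name and buffer size are illustrative.

```python
from collections import deque
from typing import Callable, Deque, List


class StoreAndForward:
    """Buffer results locally and flush them when the uplink is available."""

    def __init__(self, send: Callable[[dict], bool], max_buffered: int = 1000) -> None:
        self._send = send  # returns True when the upload succeeded
        # With maxlen set, the oldest items are dropped once the buffer is full.
        self._buffer: Deque[dict] = deque(maxlen=max_buffered)

    def record(self, result: dict) -> None:
        self._buffer.append(result)

    def flush(self) -> int:
        """Try to upload buffered items in order; return how many were sent."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # still offline: keep the item for the next attempt
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._buffer)


# Simulated uplink: a mutable flag stands in for real connectivity checks.
link = {"online": False}
uploaded: List[dict] = []


def fake_send(item: dict) -> bool:
    if link["online"]:
        uploaded.append(item)
    return link["online"]


queue = StoreAndForward(fake_send)
queue.record({"event": "defect", "line": 3})
queue.record({"event": "ok", "line": 3})

sent_offline = queue.flush()  # nothing leaves the device while offline
link["online"] = True
sent_online = queue.flush()   # both items are uploaded in order
```

Bounding the buffer is a deliberate trade-off: on a device with limited storage, dropping the oldest results is usually preferable to running out of memory during a long outage.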

⚙️ 5. Improved Reliability

Critical systems (like autonomous machines) can operate independently of network failures.

At MyFluiditi, these advantages translate into faster, more resilient, and cost-efficient AI systems for our clients.

Real-World Use Cases of Edge AI

Edge AI is already transforming multiple industries:

🚗 Autonomous Vehicles

Real-time decision-making for navigation and safety.

🏭 Smart Manufacturing

Predictive maintenance and defect detection on production lines.

🏥 Healthcare

On-device diagnostics and medical imaging analysis.

🛍️ Retail

Smart cameras for customer behavior analytics and security.

📱 Consumer Devices

Face recognition, voice assistants, and personalization features.

These use cases highlight a common requirement: real-time intelligence with minimal delay.

Edge AI vs Cloud AI

| Aspect | Edge AI | Cloud AI |
|---|---|---|
| Processing Location | Local device | Centralized servers |
| Latency | Very low | Higher |
| Connectivity | Optional | Required |
| Privacy | High | Moderate |
| Scalability | Distributed | Centralized |

The reality is not “Edge vs Cloud”; it’s Edge + Cloud working together.

At MyFluiditi, we design hybrid AI ecosystems that balance both for optimal performance.

Challenges of Edge AI

Despite its advantages, Edge AI comes with engineering complexities:

- Limited hardware resources (CPU, memory, power)

- Model optimization requirements

- Device management at scale

- Security at distributed endpoints

This is where companies struggle: not in building AI, but in deploying and scaling it efficiently.

MyFluiditi addresses this by:

- Building lightweight AI models

- Creating edge-ready architectures

- Enabling seamless cloud-edge synchronization

The Future of Edge AI

Edge AI is moving from experimental to mainstream adoption.

Three developments are driving this:

- AI chips and NPUs

- 5G connectivity

- IoT ecosystems

Together, they are ushering in a phase where intelligence becomes embedded in everything.

The future is not centralized AI systems but distributed intelligence networks.

How MyFluiditi Helps You Build Edge AI Solutions

At MyFluiditi, we don’t just build AI; we build deployable, scalable intelligence systems.

We help businesses:

- Design edge-first architectures

- Optimize AI models for real-world environments

- Reduce infrastructure costs

- Deploy AI faster without heavy hiring dependencies

Our focus is simple:

Make AI practical, scalable, and production-ready.

Conclusion

Edge AI represents a fundamental shift in computing, from centralized processing to real-time, on-device intelligence.

For businesses, this means:

- Faster decisions

- Lower costs

- Better user experiences

And for forward-thinking companies like MyFluiditi, it’s an opportunity to lead the next wave of AI innovation.